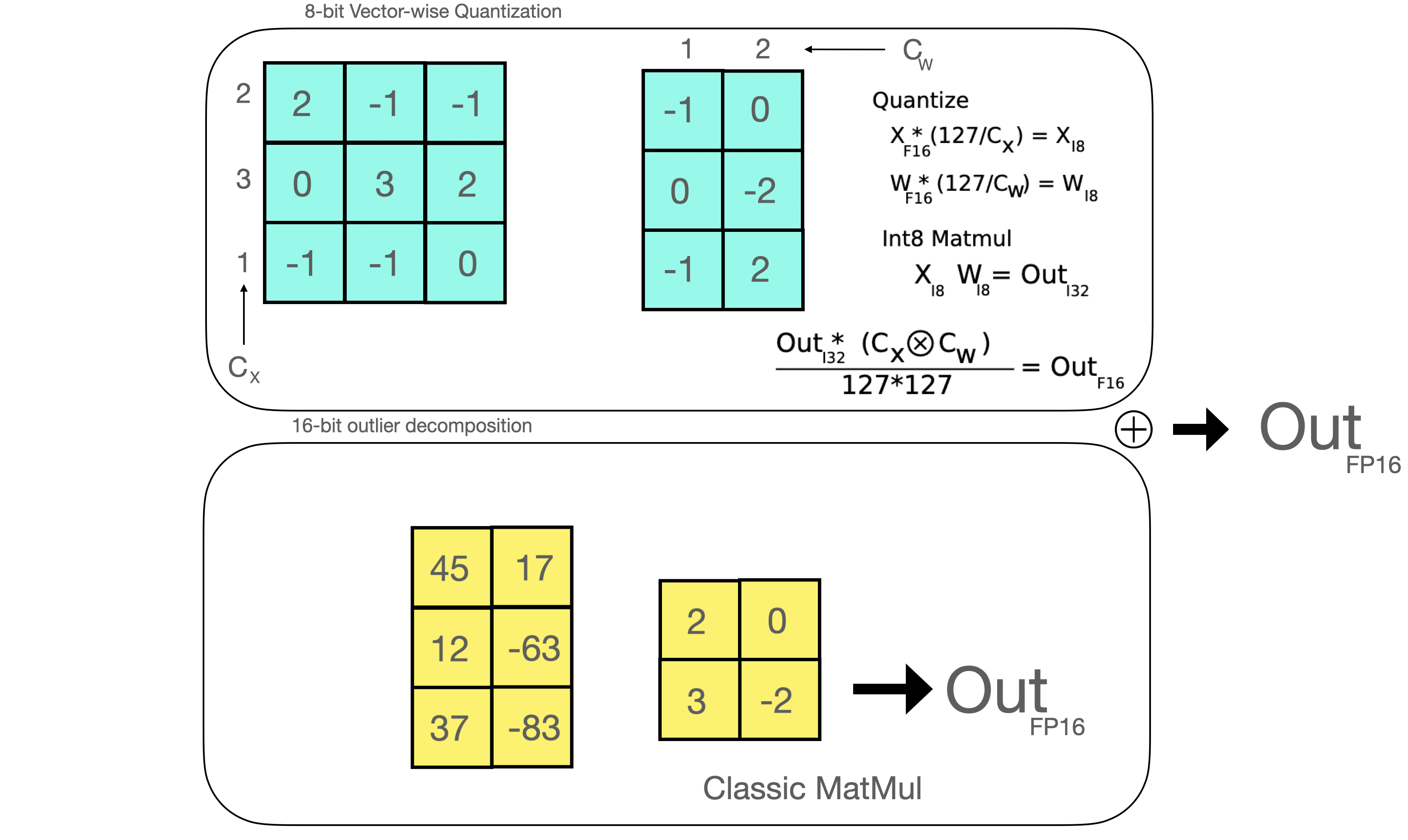

A Gentle Introduction to 8-bit Matrix Multiplication for transformers at scale using transformers, accelerate and bitsandbytes

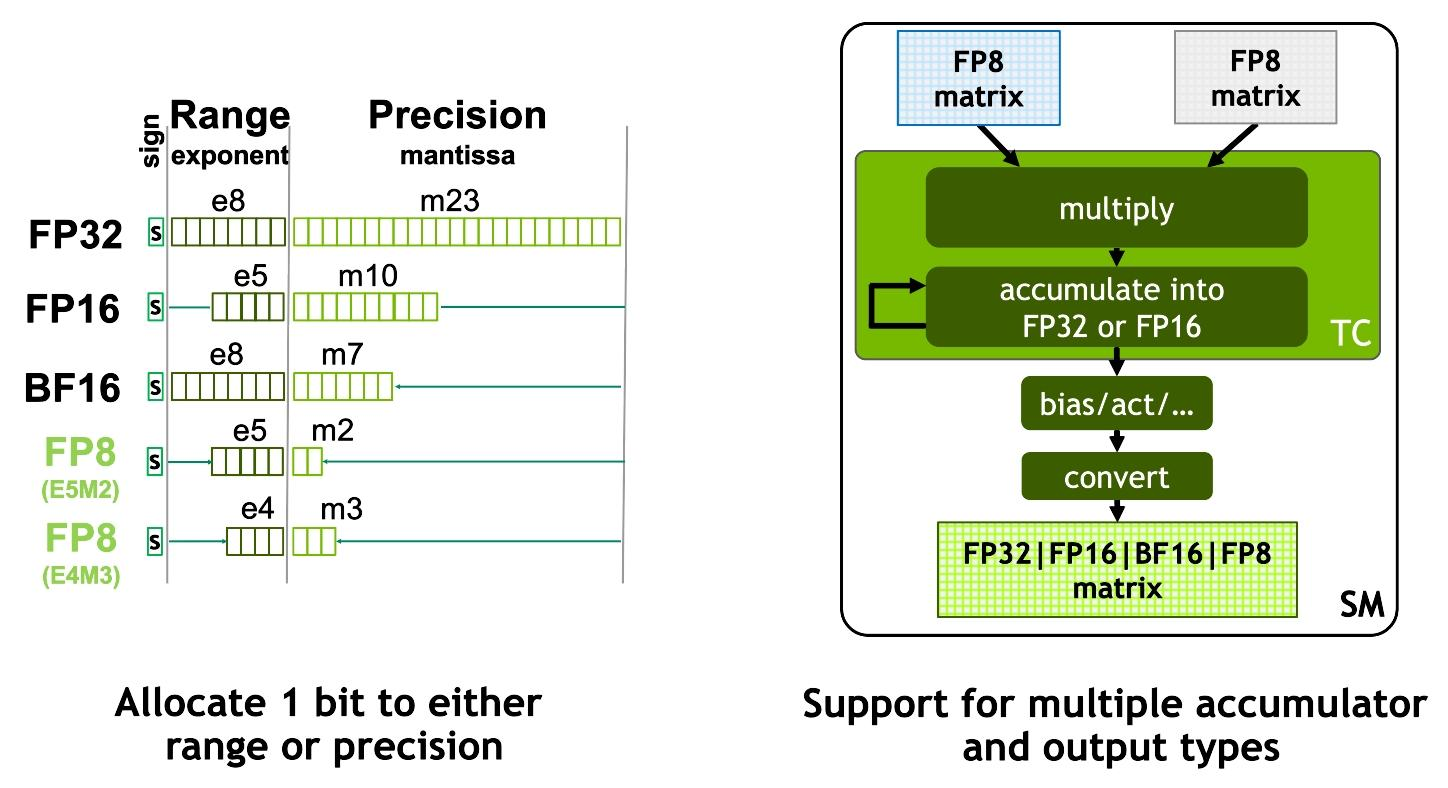

New cuBLAS 12.0 Features and Matrix Multiplication Performance on NVIDIA Hopper GPUs | NVIDIA Technical Blog

Attention module: Softmax and MatMul represent softmax operation and...

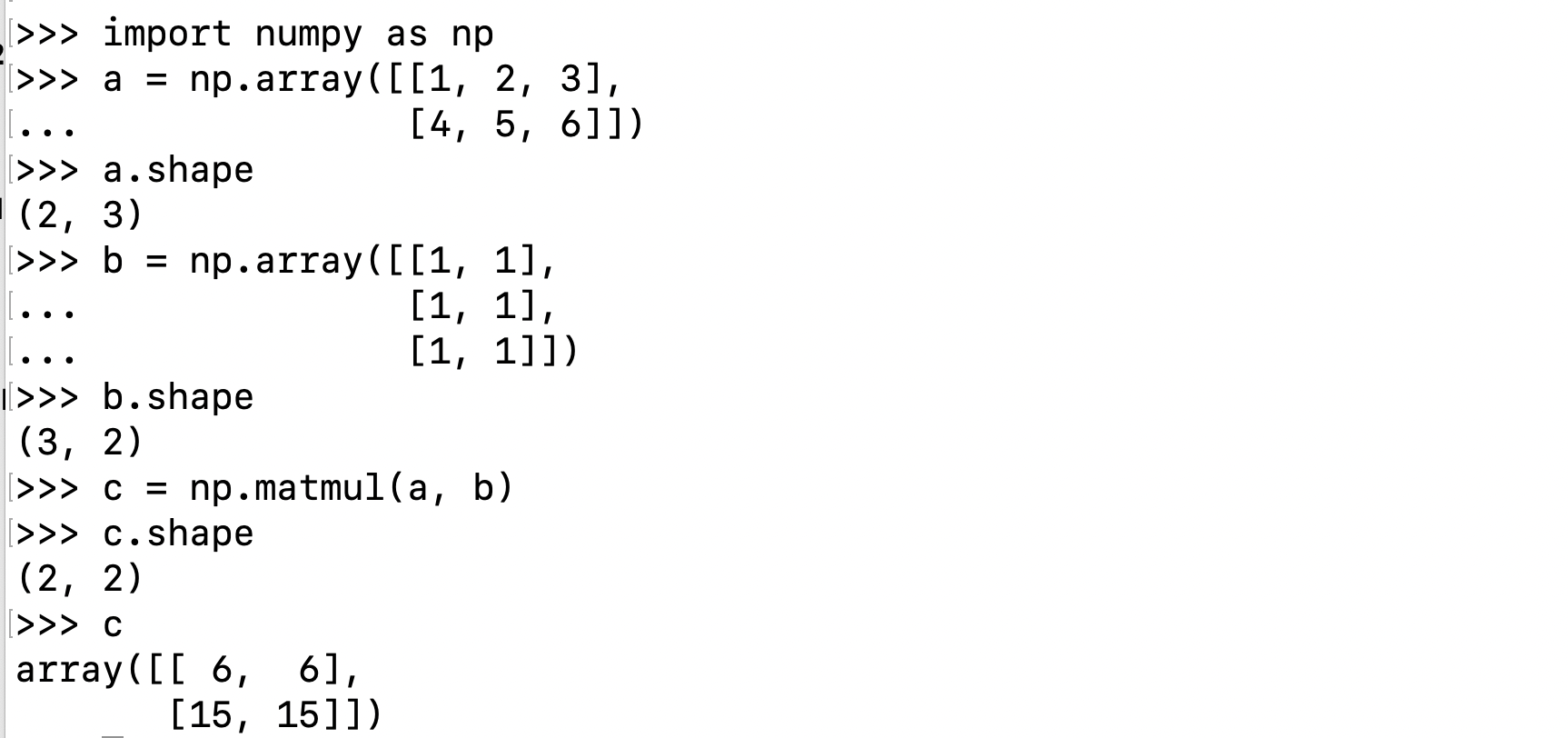

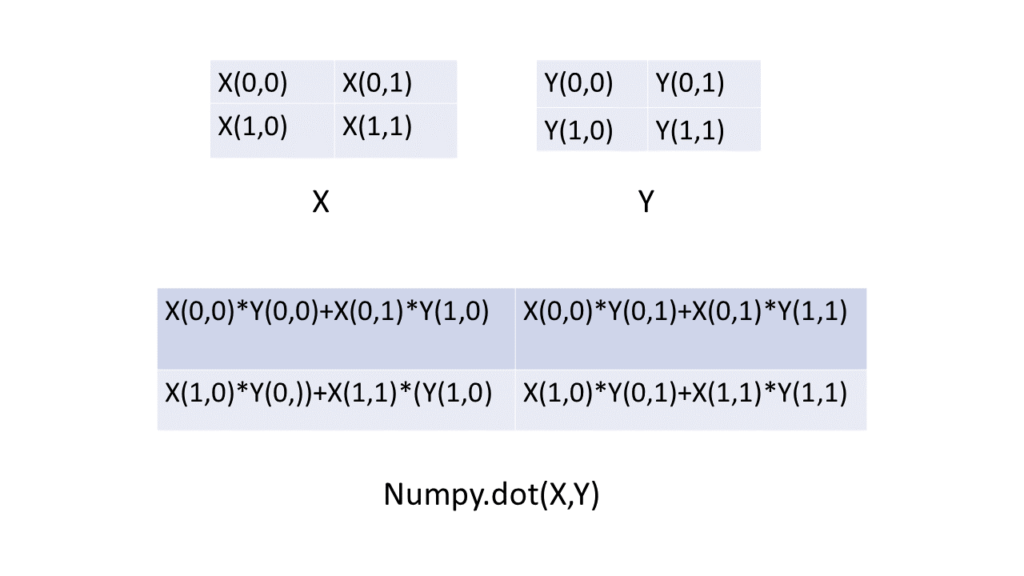

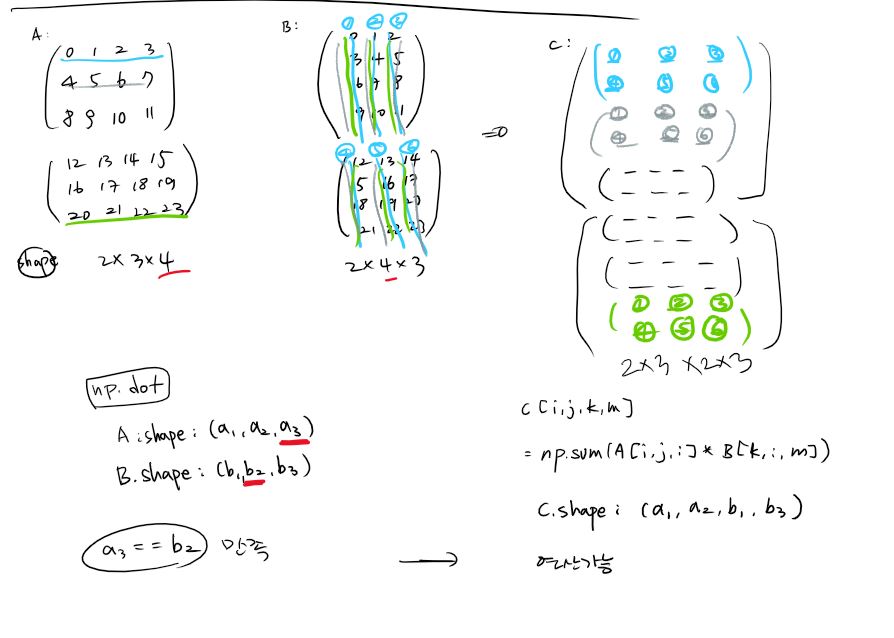

![[Python] Difference between np.dot and np.matmul](https://blog.kakaocdn.net/dn/d3pSkJ/btreRSaASOL/RsZrfv86K2FJg3thz9HXrk/img.png)

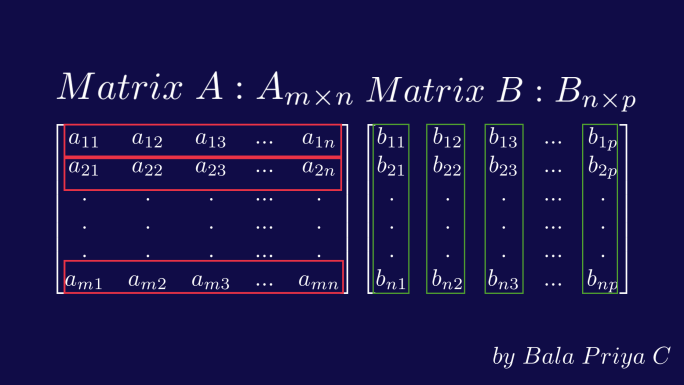

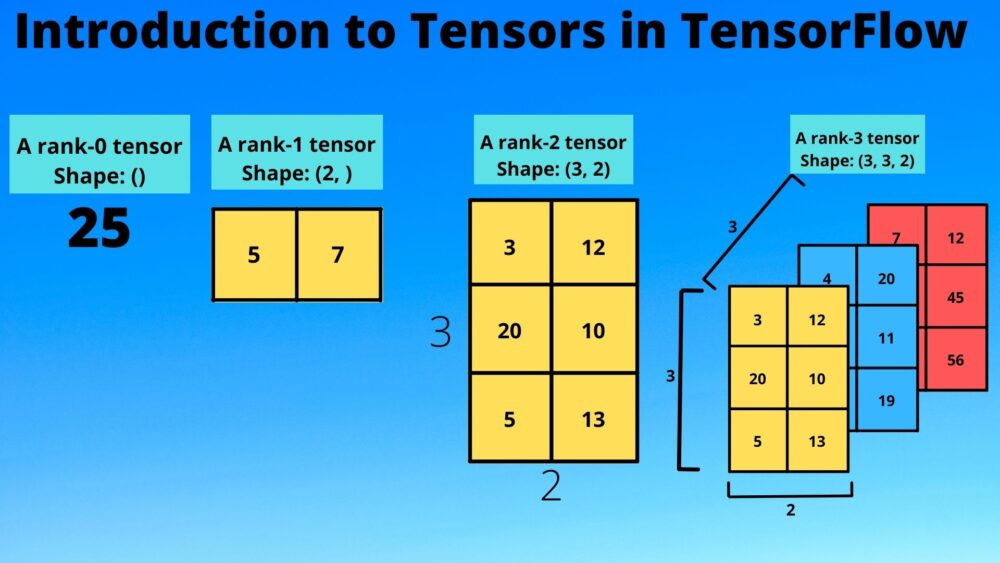

![NumPy Matrix Multiplication — np.matmul() and @ [Ultimate Guide] – Be on the Right Side of Change](https://blog.finxter.com/wp-content/uploads/2021/01/matmul-1024x576.jpg)